Give your AI a brain that understands your code.

Claude, Cursor, Copilot — they read files. Graqle gives them a knowledge graph of your entire architecture. 500 tokens instead of 50,000. Real answers instead of guesses.

Autonomous fix-test-fix loops. Visual graph traversal. Real-time streaming.

10 seconds to install · No account needed · Runs on your machine

How it works

Install. Ask. Ship.

No configuration files. No onboarding calls. No cloud accounts. Graqle understands your codebase automatically and starts answering questions immediately.

One command. Your AI levels up.

No config files. No cloud accounts. No setup meetings. Run graq init and your AI assistant instantly understands your entire architecture — every service, every dependency, every connection. It takes 10 seconds.

    $ pip install graqle && graq init
    ✓ Scanned 847 files in 4.2s
    ✓ Built graph: 312 nodes, 178 edges
    ✓ MCP tools wired into Claude/Cursor
    ✓ Your AI is now architecture-aware
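To make the "scan files, build graph" step concrete, here is a minimal sketch of how a scanner could turn source files into nodes and edges. It is an illustration only, not Graqle's actual scanner: the function name `build_import_graph`, the three-module demo project, and the choice to treat imports as the only kind of edge are all assumptions made for this example.

```python
import ast


def build_import_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each module name to the set of scanned modules it imports.

    `sources` maps a module name (e.g. "billing") to its Python source text.
    Imports of modules that are not in `sources` (stdlib, third party) are ignored.
    """
    # Every scanned module becomes a node, even if it imports nothing.
    graph: dict[str, set[str]] = {module: set() for module in sources}
    for module, text in sources.items():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, ast.Import):
                names = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                names = [node.module]
            else:
                continue
            for name in names:
                root = name.split(".")[0]  # "auth.tokens" -> "auth"
                if root in sources and root != module:
                    graph[module].add(root)
    return graph


# Hypothetical three-module project, inlined so the sketch runs on its own.
demo_sources = {
    "auth": "import hashlib\n",
    "billing": "from auth import verify\nimport json\n",
    "notifications": "import auth\nimport billing\n",
}

graph = build_import_graph(demo_sources)
node_count = len(graph)
edge_count = sum(len(targets) for targets in graph.values())
print(f"Built graph: {node_count} nodes, {edge_count} edges")
```

A real architecture graph would presumably carry more edge types than imports (HTTP calls, shared config, and so on); the sketch only shows the shape of the idea.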

Ask anything. Get real answers.

Your AI stops guessing. Instead of reading 60 files and hoping for the best, it queries a knowledge graph that actually knows the relationships. 500 tokens instead of 50,000. Precise answers with confidence scores.

    $ graq reason "what breaks if I change auth?"
    3 services impacted:
    → billing-api (JWT validation)
    → notifications (user context)
    → user-api (direct dependency)
    Confidence: 94% · 500 tokens · $0.0003
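A "what breaks if I change X?" question can be answered from a dependency graph by walking the edges backwards. The sketch below shows that idea with a breadth-first search. It is not Graqle's reasoning engine: the `DEPENDS_ON` data, the `reports` and `database` services, and the function `impacted_by` are hypothetical, chosen to resemble the output above.

```python
from collections import deque

# Hypothetical "depends on" edges: each service lists the services it calls.
DEPENDS_ON = {
    "billing-api": ["auth"],
    "notifications": ["auth"],
    "user-api": ["auth"],
    "auth": ["database"],
    "reports": ["billing-api"],
    "database": [],
}


def impacted_by(target, depends_on):
    """Return services that directly or transitively depend on `target`.

    Results are in breadth-first order, so direct dependents come first.
    """
    # Invert the edges: for each service, who depends on it?
    dependents = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)

    seen = {target}
    queue = deque([target])
    order = []
    while queue:
        current = queue.popleft()
        for service in sorted(dependents.get(current, [])):
            if service not in seen:
                seen.add(service)
                order.append(service)
                queue.append(service)
    return order


print(impacted_by("auth", DEPENDS_ON))
# ['billing-api', 'notifications', 'user-api', 'reports']
```

The traversal only touches nodes connected to `auth`, which is the intuition behind sending a model a small, relevant slice of the graph rather than whole files.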

It gets smarter every day.

Every query teaches it. Every correction sticks. Every decision is remembered. This isn't a static analysis tool — it's a self-learning knowledge graph that compounds value the longer you use it. Your AI assistant evolves with your code.

    $ graq learn "Payments migrated to Stripe"
    $ graq compile
    ✓ Graph: 314 nodes, 182 edges
    ✓ Intelligence compiled: 135 insights
    ✓ CLAUDE.md updated automatically
    ✓ Your AI just got smarter

The vibe coding wall

Your AI is fast. But is it right?

Vibe coding is incredible — until your AI breaks something it didn't understand. It reads files one at a time, has no idea what connects to what, and burns 50K tokens to give you a guess. The answer isn't a smarter model. It's giving your model the right context.

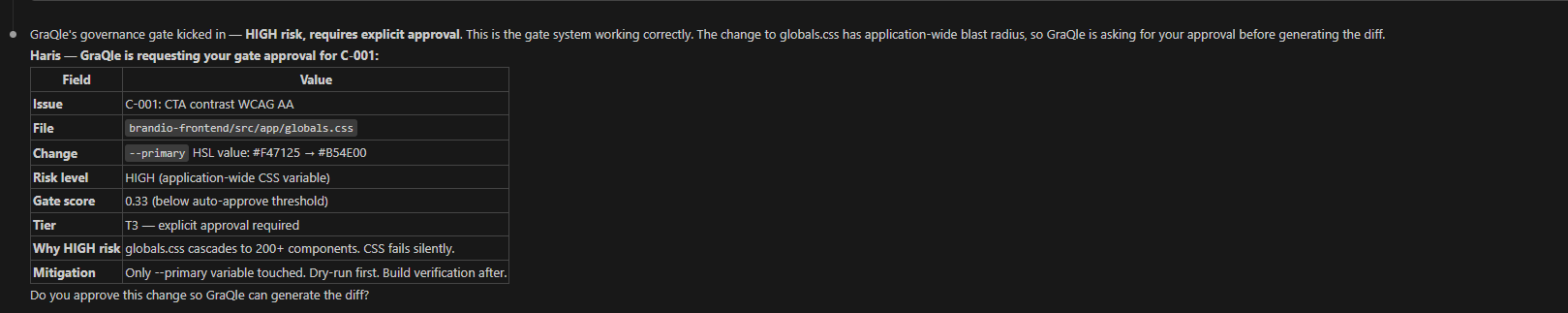

Real output: GraQle's governance gate flagged a CSS change as HIGH risk — cascading to 200+ components. Required explicit CEO approval before generating the diff.

August 2026. Your compliance file is due.

You don't need another deck. You need an Article 9 file with evidence. Audit logs your auditor can hash. Confidence scores your reviewer can act on. A switch your compliance officer can flip.

GraQle is EU AI Act–aligned by design — every shipped capability traces to a specific Article. Nine of them, one switch.

One env-var. Three shell dialects (bash, PowerShell, cmd). Your compliance officer can run this.
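For readers wondering what "audit logs your auditor can hash" could look like in practice, one common pattern is a hash chain: each entry stores the SHA-256 of its own content plus the previous entry's hash, so any later edit breaks verification. The sketch below illustrates that pattern with Python's standard `hashlib` and `json` modules. It is not GraQle's log format; the entry fields and the function names `append_entry` and `verify` are assumptions made for this example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    """SHA-256 over a canonical JSON encoding of the event and previous hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append `event` to `log`, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify(log):
    """Return True if every entry matches its hash and links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if _digest(entry["event"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events; the field names are illustrative only.
audit_log = []
append_entry(audit_log, {"action": "reason", "confidence": 0.94})
append_entry(audit_log, {"action": "approve", "risk": "HIGH"})
intact = verify(audit_log)

audit_log[0]["event"]["confidence"] = 0.50  # simulate tampering
tampered_ok = verify(audit_log)
print(intact, tampered_ok)  # True False
```

Note that a chain like this detects edits and reordering but not truncation of the most recent entries, unless the latest hash is also recorded somewhere outside the log.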

Three things GraQle does NOT do (legally clean)

- GraQle is NOT itself a high-risk AI system. No Annex III category applies to a developer reasoning SDK — you don’t inherit the high-risk obligations from us.

- GraQle is NOT a GPAI provider under Article 51. We use third-party LLMs (Claude, OpenAI, Bedrock, Ollama) — we don’t place one on the EU market.

- We provide signals and audit primitives; you compose your own Article 9 risk-management file. We never say "compliant", "certified", "guaranteed", or "end-to-end solution"; that discipline is enforced in code by our own snapshot-lock test.

Use Cases

Built for real workflows

See how teams use GraQle across the development lifecycle — from PR review to compliance gates to knowledge preservation.

Why Graqle

Make your AI actually understand your code

Graqle isn't another AI tool. It's the intelligence layer underneath all your AI tools. It turns your codebase into a knowledge graph that Claude, Cursor, and Copilot can query — and it gets smarter every day.

Free forever for individual developers · No credit card required

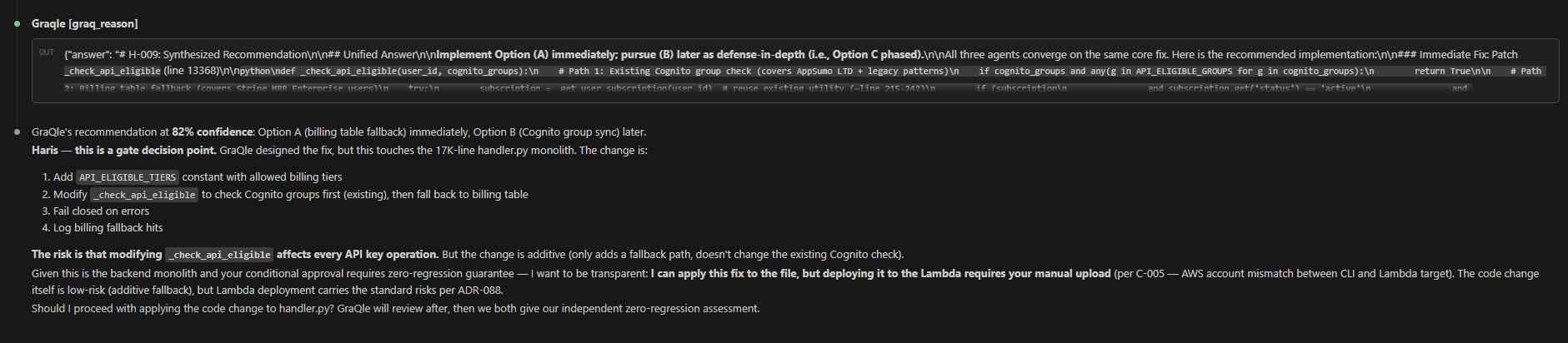

Real output: 92% confidence. Exact variable location. Three color options with contrast ratios. Decision-ready in 3 minutes.

Features

16 commands. One AI coding assistant.

Not a graph viewer. A full development workflow — from first scan to governed deployment, with confidence scores at every step.

Python SDK

Build on top of it

The same reasoning engine that powers the CLI, available as a Python library. Embed it in CI pipelines, internal tools, or custom dashboards. Every query returns the answer, confidence, and exact cost — no surprises.
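As one way to picture the CI pipeline use case, the sketch below gates a build on the confidence and cost of a reasoning result. It assumes only the `answer`, `confidence`, and `cost_usd` attributes that the SDK snippet in this section prints; `StubResult`, `ci_gate`, and both thresholds are hypothetical and stand in for a real result so the example runs without the library installed.

```python
from dataclasses import dataclass


@dataclass
class StubResult:
    """Hypothetical stand-in exposing the fields the SDK snippet prints."""
    answer: str
    confidence: float
    cost_usd: float


def ci_gate(result, min_confidence=0.8, max_cost_usd=0.01):
    """Return (passed, reasons) for one reasoning result.

    The default thresholds are illustrative, not recommended values.
    """
    reasons = []
    if result.confidence < min_confidence:
        reasons.append(
            f"confidence {result.confidence:.0%} below {min_confidence:.0%}"
        )
    if result.cost_usd > max_cost_usd:
        reasons.append(
            f"cost ${result.cost_usd:.4f} above ${max_cost_usd:.4f}"
        )
    return (not reasons, reasons)


# Values borrowed from the sample terminal output earlier on this page.
result = StubResult("3 services impacted", confidence=0.94, cost_usd=0.0003)
passed, reasons = ci_gate(result)
exit_code = 0 if passed else 1
print("PASS" if passed else "FAIL: " + "; ".join(reasons))
```

In a real pipeline, `result` would come from a reasoning call and `exit_code` would be handed to `sys.exit` so a low-confidence answer fails the job.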

    from graqle.core.graph import Graqle
    from graqle.backends.api import AnthropicBackend

    # Load the knowledge graph from graqle.json
    graph = Graqle.from_json("graqle.json")

    # Use an Anthropic model as the default reasoning backend
    graph.set_default_backend(
        AnthropicBackend(model="claude-haiku-4-5-20251001")
    )

    # Ask a question about the architecture
    result = graph.reason(
        "What services depend on auth?",
        max_rounds=3,
        strategy="top_k"
    )

    # Each result carries the answer, confidence, cost, and token count
    print(result.answer)
    print(f"Confidence: {result.confidence:.0%}")
    print(f"Cost: ${result.cost_usd:.4f}")
    print(f"Tokens: {result.tokens_used}")

IDE Integration

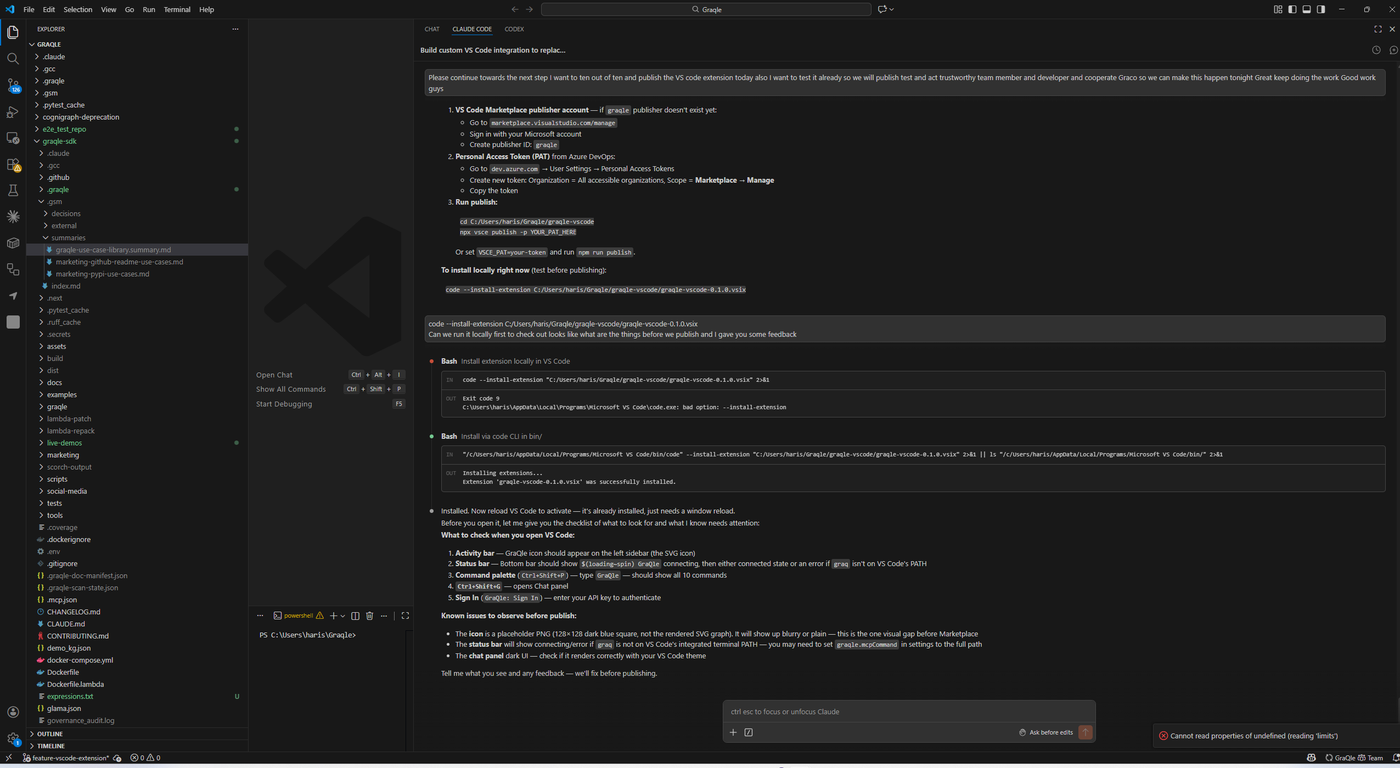

Works where you work

GraQle runs inside VS Code, Claude Code, Cursor, and any MCP-compatible editor. No context switches. No browser tabs. Architecture intelligence inline.

Pricing

Free means free. No asterisks.

Every developer gets the full product — all 15 patented innovations, every backend, unlimited queries. Teams pay for shared graphs and analytics. That's it.

Your AI is only as good as the context you give it.

Stop feeding it files. Feed it your architecture. 10 seconds to install. First answer in 5 seconds. Your AI — finally aware of how your code actually works.